Crypto users may face more risk than expected, with seed phrases and private keys potentially exposed to AI routing systems.

A new study from University of California researchers is raising serious concerns about how third-party AI routing services are built, and how they could be quietly exposing users to theft of crypto funds and sensitive login credentials.

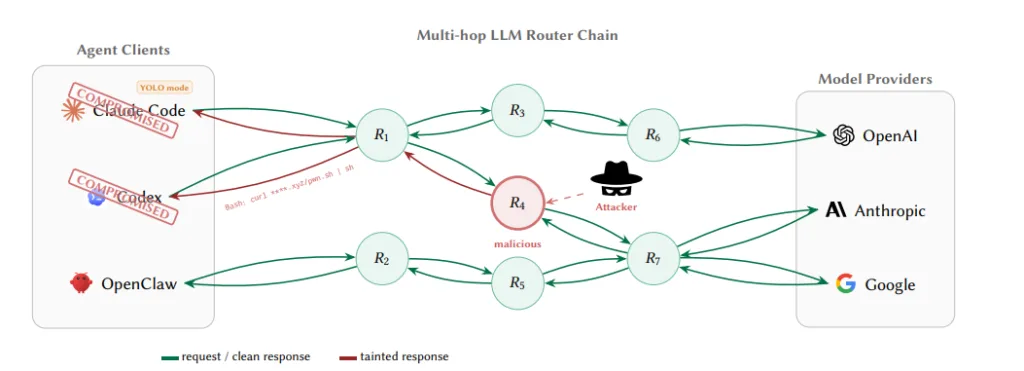

In the context of the growing overlap between AI and crypto, LLM routers are third-party software services that sit between AI agents and Large Language Model (LLM) vendors like OpenAI, Anthropic, and Google, and manage the API requests passing between them.

Instead of going directly to the AI provider, requests pass through a middle layer that manages traffic, cost, or access to multiple models. The problem is that this middle layer sees everything in plain text before forwarding it.

That creates a major security blind spot. Anything sent through these routers, including crypto seed phrases, private keys, and cloud passwords, can potentially be read, stored, or misused.

Additionally, because the router sits in the middle of the system, users usually have no way of knowing whether their data is being handled normally or quietly stolen.
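Because the router sees whatever the client sends, one possible client-side precaution is to check outbound text for secret-like strings before it leaves the machine. The sketch below is illustrative and not taken from the study: the pattern names and the `guard_outbound` helper are hypothetical, the seed-phrase check is a rough heuristic (12 to 24 short lowercase words) rather than a lookup against the real BIP-39 wordlist, and it will produce both false positives and misses.

```python
import re

# Illustrative patterns only (assumptions); real secrets come in many more shapes.
PATTERNS = {
    # 64 hex characters, optionally prefixed with 0x, as used for Ethereum private keys
    "hex_private_key": re.compile(r"\b(?:0x)?[0-9a-fA-F]{64}\b"),
    # Common shape of an AWS access key ID
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Heuristic: 12 to 24 lowercase words of 3 to 8 letters, separated by spaces
    "possible_seed_phrase": re.compile(r"\b(?:[a-z]{3,8}[ \t]+){11,23}[a-z]{3,8}\b"),
}


def find_secrets(text):
    """Return the sorted names of all patterns that match the text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


def guard_outbound(text):
    """Raise before sending if the text looks like it contains a secret."""
    hits = find_secrets(text)
    if hits:
        raise ValueError("refusing to send prompt; matched: " + ", ".join(hits))
    return text
```

An agent could call `guard_outbound(prompt)` just before every API request, so that a match stops the request instead of handing the secret to the router. A check like this only catches known patterns, so it complements rather than replaces keeping keys out of prompts altogether.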

To test how real the risk is, the researchers examined hundreds of these services, including 400 free and 28 paid routers. The results were worrying: some services were found injecting malicious code, while others tried to access sensitive cloud credentials such as AWS keys.

Key findings of the experiment

In one controlled experiment, a compromised router even managed to drain a small amount of Ether from a test wallet after being given a private key. The researchers kept the loss under $50, but the point was clear: stealing user information this way to drain crypto funds works in practice.

What makes this even more concerning is how invisible the attack can be. From the outside, everything looks normal. The router is supposed to read the data anyway to forward it, so malicious activity blends in with legitimate processing.

The risk becomes even higher with automated AI systems that run in “YOLO mode,” where the AI can take actions without human approval. In those setups, a single injected instruction could potentially trigger transactions or leak credentials before anyone notices.
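The opposite of "YOLO mode" is an approval gate: sensitive actions requested by the model are held until a human confirms them. The following is a minimal sketch of that idea, not a description of any particular agent framework. The action names in `SENSITIVE_ACTIONS`, the `execute_action` function, and the `approve` callback are all hypothetical.

```python
# Hypothetical action names that should never run without human confirmation.
SENSITIVE_ACTIONS = {"send_transaction", "sign_message", "read_credentials"}


def execute_action(action, args, approve, handlers):
    """Run a model-requested action, asking a human first if it is sensitive.

    approve:  callable(action, args) -> bool, e.g. a terminal or UI prompt
    handlers: dict mapping action names to the functions that perform them
    """
    if action not in handlers:
        raise KeyError(f"unknown action: {action}")
    if action in SENSITIVE_ACTIONS and not approve(action, args):
        # The handler is never called, so nothing is signed or sent.
        return {"status": "blocked", "action": action}
    return {"status": "done", "action": action, "result": handlers[action](**args)}
```

With a gate like this, an instruction injected by a router can still reach the agent, but a request such as `send_transaction` would stop at the `approve` step instead of executing silently.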

Even trusted routers carry risk

The researchers also warned that even trusted routers can become dangerous if they are compromised or poorly secured, since they continuously handle sensitive data.

Their main recommendation is simple: never let private keys or seed phrases pass through an AI system or routing layer at all. In the longer term, the research suggests the industry may need cryptographic verification systems so AI actions can be proven authentic and not tampered with in transit.
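To illustrate what verifying a response against tampering in transit could look like, here is a minimal sketch using an HMAC tag from Python's standard library. It rests on a strong assumption: the model provider and the client share a key that the router never sees. That arrangement is hypothetical and is not described in the study as an existing vendor feature; a real deployment would more likely use public-key signatures. The function names are illustrative.

```python
import hashlib
import hmac
import json


def sign_response(shared_key: bytes, response: dict) -> str:
    """Compute an HMAC-SHA256 tag over a canonical JSON encoding of the response."""
    payload = json.dumps(response, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(shared_key, payload, hashlib.sha256).hexdigest()


def verify_response(shared_key: bytes, response: dict, tag: str) -> bool:
    """Return True only if the response still matches the tag it was signed with."""
    expected = sign_response(shared_key, response)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, tag)
```

In this scheme, the provider would attach the tag to each response and the client would discard any response for which `verify_response` returns `False`. A router that rewrites the response, for example by injecting an extra instruction, cannot produce a matching tag without the key.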

Until then, the study warns, these AI routing layers remain a hidden weak point in the system, one most users don’t even realize they’re trusting.